Mad at the Polls? A Local Pollster Explains What Might’ve Happened

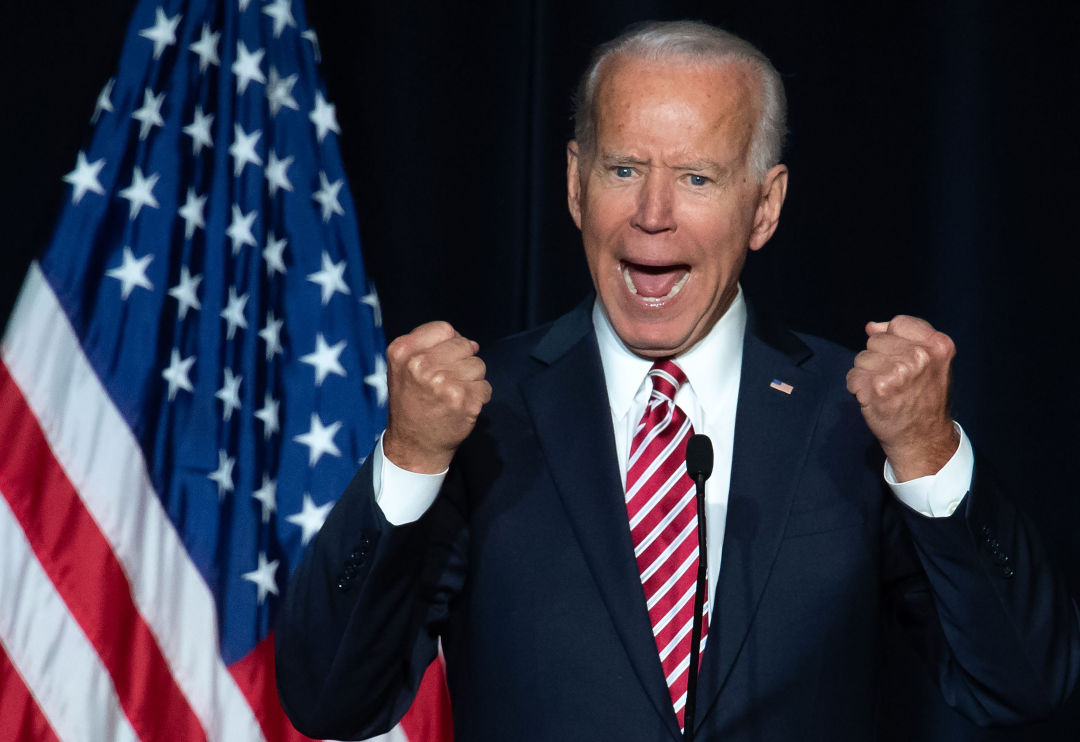

This is how we've felt all week.

Image: Shutterstock By Christos S

Everyone’s mad at the polls. Or at least it felt that way on Tuesday night. After Donald Trump comfortably triumphed in two swing states, Joe Biden backers had flashbacks to 2016, when Democrat Hillary Clinton underperformed political experts’ projections. How could the polls be so wrong again? Dems grumbled. They weren’t alone; Trump supporters were also peeved. Their candidate’s claims of “fake” numbers seemed justified after the Republican leader outperformed the polling average in Ohio by seven points.

The narrative of faulty forecasting has weakened since Election Day, as mail-in votes in toss-up states like Pennsylvania and Arizona have brought Biden vote counts in line with national polling averages during the run-up to the election. But after all the votes are finally tallied, there’s still going to be some level of inaccuracy that margin of error can’t explain away, notes longtime Washington pollster Stuart Elway. “It is true that they seem to have overestimated Biden,” Elway told me by phone on Thursday. “So there's something going on here for the second cycle in a row.”

Elway offers a theory for this statistical shortcoming. When the Elway Research founder began his polling career in 1975—when the Seattle legislative delegation “was mostly Republican” and “dinosaurs still roamed the earth,” Elway quips—the response rate to his nascent firm’s phone interviews was somewhere between 40 and 60 percent. “Now it’s more like 3 and 4 percent,” says Elway, whose recent collaboration with Crosscut has yielded survey results on defunding the police and the gubernatorial race.

To be clear: No serious pollster in 2020 solely relies on voters answering calls from weird numbers. Elway’s own operation runs the gamut of outreach methods, using text messages, calls to landlines and cell phones, automated phone interviews, live interviews, and even mail to fetch responses from the populace. Pollsters also increasingly enlist firms like Bothell-based L2 to collect and maintain random representative samples of the population for political and consumer surveys, which expedites the process.

Still, with response rates so low and a candidate, Trump, who encourages his supporters to distrust institutions, “it seems possible that those folks are not going to answer a poll,” says Elway. “So you might get a non-college white male on your survey, and you might have to statistically weight his results, because they're under-represented, but you're still talking to a white non-college person who answered the poll. You can't say anything about people who didn't answer the poll.” Elway also notes that voter suppression could play a role in the discrepancy between polls and actual results; a deep data dive post-election beckons.
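To make Elway's point about weighting concrete, here is a minimal sketch in Python. Every number in it is invented for illustration and none comes from a real poll; the group names, shares, and function names are assumptions. It shows how weighting can correct the mix of respondents while leaving untouched any gap between the people in a group who answered and the people in that group who did not.

```python
# All figures below are hypothetical and exist only to illustrate the idea.
POPULATION_SHARE = {"college": 0.40, "non_college": 0.60}   # assumed electorate mix
RESPONDENTS = {"college": 600, "non_college": 400}          # who answered the poll
SUPPORT_AMONG_RESPONDENTS = {"college": 0.60, "non_college": 0.45}
# Assumed "true" support in each group, including people who never answered.
TRUE_SUPPORT = {"college": 0.60, "non_college": 0.40}


def unweighted_estimate(respondents, support):
    """Candidate support if every respondent simply counts once."""
    total = sum(respondents.values())
    return sum(respondents[g] * support[g] for g in respondents) / total


def weighted_estimate(population_share, support):
    """Candidate support after each group is scaled to its population share."""
    return sum(population_share[g] * support[g] for g in population_share)


raw = unweighted_estimate(RESPONDENTS, SUPPORT_AMONG_RESPONDENTS)
weighted = weighted_estimate(POPULATION_SHARE, SUPPORT_AMONG_RESPONDENTS)
truth = weighted_estimate(POPULATION_SHARE, TRUE_SUPPORT)

print(f"Unweighted poll: {raw:.1%}")
print(f"Weighted poll:   {weighted:.1%}")
print(f"Assumed truth:   {truth:.1%}")
```

In this made-up scenario, weighting moves the estimate closer to the assumed truth but cannot close the gap, because the non-college voters who answered differ from the ones who did not.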

Regardless of whether these factors contributed to the past two elections' surprising results, it’s important to understand two polling fundamentals before you launch into your next comment-section rant. One is the aforementioned margin of error, or the amount by which a survey result could plausibly differ from the true number. As Pew Research helpfully explains: “A margin of error of plus or minus 3 percentage points at the 95% confidence level means that if we fielded the same survey 100 times, we would expect the result to be within 3 percentage points of the true population value 95 of those times.”

A 3-point margin of error may not sound like a lot, but in a tight election, that can explain one candidate winning a state, or states, instead of the other. Results that fall outside the margin of error, like Trump’s wins in Ohio and Iowa and his close loss in Wisconsin, are thus fair to question.
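For readers who want to see where a figure like "plus or minus 3 points" comes from, the sketch below uses the standard textbook formula for a simple random sample at the 95% confidence level. It is an approximation under stated assumptions: real polls are weighted and usually report somewhat larger margins, and the function name and sample sizes here are illustrative, not taken from any pollster mentioned in this story.

```python
import math


def margin_of_error(sample_size, proportion=0.5, z=1.96):
    """Approximate margin of error, in percentage points.

    Assumes a simple random sample. z=1.96 corresponds to the 95%
    confidence level, and proportion=0.5 is the worst case, which
    gives the widest margin.
    """
    standard_error = math.sqrt(proportion * (1 - proportion) / sample_size)
    return 100 * z * standard_error


# Illustrative sample sizes only.
for n in (400, 1067, 2500):
    print(f"{n} respondents: about +/- {margin_of_error(n):.1f} points")
```

Under these assumptions, a poll needs a little more than 1,000 respondents to reach a margin of about 3 points, and quadrupling the sample only cuts the margin in half.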

The second fundamental: a model is not a poll. Many people habitually refresh Nate Silver’s FiveThirtyEight predictions (Elway says he checks the site every 20 minutes during the election) and the oft-maligned New York Times needles. But while both of these forecasts incorporate polling averages, they also include other data in their projections. FiveThirtyEight even grades different polls, and Elway Research consistently earns high marks. So when one of these models says a candidate has a 90 percent chance of winning a state, that’s not necessarily what the polls themselves show. “Pollsters will tell you, ‘We're not predicting. Polls are not designed to predict. Polls are a snapshot in time,’” says Elway.

He understands that it’s hard for voters to refrain from making that leap. “The developing narrative now is the polls are the big losers again,” he says, “so that's going to be a hard narrative to combat, and it's not without merit.”

He certainly doesn’t want to absolve his industry of any blame. Though he didn’t conduct any polling for the 2020 general election (not worth the time, he says, given the likely Democratic landslide in Washington), he believes pollsters need to adjust to the “systematic” shortcomings apparent in the last two election cycles.

But can polling overcome this latest round of backlash? Elway thinks so. "It's not taking any more of a bashing now than it did in 1948," he says. A picture of the infamous headline, "Dewey Defeats Truman," hangs from one of his walls. "The methodology will continue to evolve."